This topic is only relevant for users running the compiled version of unbound. All required files can be found on GitHub.

The unbound documentation has a section "Cache DB Module Options".

This section covers the use of a Redis backend.

This setup requires unbound to be compiled with the configure options "--with-libhiredis" and "--enable-cachedb", which in turn requires the libhiredis-dev package (sudo apt-get install libhiredis-dev).
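For anyone who wants to see the build step in isolation, here is a minimal sketch. It assumes an already unpacked unbound source tree. The function name and the path argument are hypothetical, and the script on GitHub may pass additional configure options.

```shell
# Sketch: build unbound with the Redis-backed cachedb module.
# Assumes an unpacked unbound source tree; the path argument is hypothetical.
build_unbound_with_cachedb() {
    local src_dir="$1"
    # hiredis headers are needed for --with-libhiredis
    sudo apt-get install -y libhiredis-dev || return 1
    ( cd "$src_dir" \
        && ./configure --enable-cachedb --with-libhiredis \
        && make \
        && sudo make install )
}
```

Usage would be something like `build_unbound_with_cachedb ~/unbound-src`, with the directory adjusted to wherever the sources were extracted.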

The script on GitHub (/home/pi/compile_unbound.sh) has all the necessary commands to install and configure redis-server and to compile unbound with the required options. The screenshot below shows the Redis configuration changes the script makes (sed commands). These changes are also recommended in the unbound documentation (the Redis server must be configured to limit the cache size, preferably with some kind of least-recently-used eviction policy).

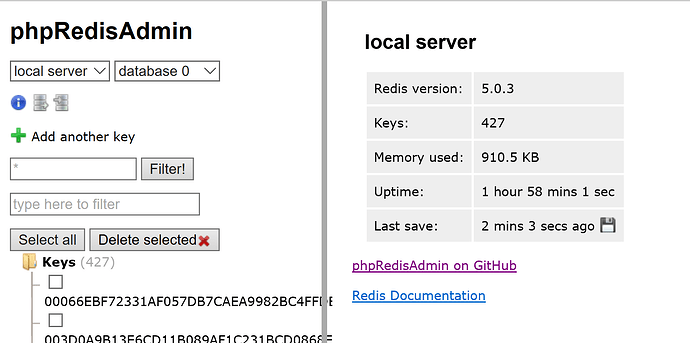
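For readers who can't see the screenshot, the following is a rough sketch of this kind of change, not a copy of the script's actual sed commands. It sets the two standard Redis directives "maxmemory" and "maxmemory-policy". The function name is hypothetical, and the values in the usage note are illustrative assumptions.

```shell
# Sketch: cap Redis memory and pick an eviction policy in a redis.conf-style file.
# Not the exact commands from compile_unbound.sh; the function name is hypothetical.
set_redis_cache_limits() {
    local conf="$1" maxmem="$2" policy="$3"
    local key value
    for key in maxmemory maxmemory-policy; do
        if [ "$key" = maxmemory ]; then value="$maxmem"; else value="$policy"; fi
        if grep -Eq "^#? *${key} " "$conf"; then
            # Directive exists (possibly commented out): uncomment and overwrite it.
            sed -i -E "s|^#? *${key} .*|${key} ${value}|" "$conf"
        else
            # Directive not present at all: append it.
            printf '%s %s\n' "$key" "$value" >> "$conf"
        fi
    done
}
```

From a root shell this could be called as `set_redis_cache_limits /etc/redis/redis.conf 64mb allkeys-lru`, followed by a restart of redis-server. The 64mb limit is only an example; pick a value that fits your Pi.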

As usual, the Raspbian version of Redis isn't the latest (current stable is 5.0.5), but since the server is for internal use only, I can live with that.

Finally, unbound needs to be configured to actually use the backend. This is done with the configuration file /etc/unbound/unbound.conf.d/redis.conf (see GitHub).
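To give an idea of what such a file contains, here is a minimal sketch using the cachedb options from the unbound documentation. The values assume a Redis server on the same machine with its default port. This is not necessarily identical to the file on GitHub, and the function name is hypothetical.

```shell
# Sketch: write a minimal cachedb configuration for unbound to the given path.
# Values are assumptions (local Redis, default port), not the contents of the GitHub file.
write_cachedb_conf() {
    cat > "$1" <<'EOF'
server:
    # cachedb sits between the validator and the iterator
    module-config: "validator cachedb iterator"
cachedb:
    backend: "redis"
    redis-server-host: 127.0.0.1
    redis-server-port: 6379
    # timeout in milliseconds
    redis-timeout: 100
EOF
}
```

Writing it to a scratch location first, for example `write_cachedb_conf /tmp/redis.conf`, makes it easy to compare against the real file before touching /etc/unbound/unbound.conf.d/. Running unbound-checkconf afterwards validates the complete configuration.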

After compiling unbound and making the necessary configuration changes, you can verify that it all works by looking at the Redis data in a web interface. To add this web interface to your lighttpd configuration, simply run the script /home/pi/install_phpRedisAdmin.sh, restart lighttpd, and browse to http://<pihole_IP_address>/phpRedisAdmin/

Result:

- I can verify that the Redis backend is actually receiving entries by looking at the web interface. Although the data isn't really readable, you can almost always identify the domain.

- The unbound log doesn't appear to indicate where the data (IP address) comes from (unbound's in-memory cache, the Redis backend, or a fresh lookup). Any ideas here?
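One possible way to narrow this down from the command line, offered as a sketch rather than a confirmed answer: redis-cli can report the number of stored keys and stream every command the server receives. The function names are hypothetical, and the host and port assume a default local Redis.

```shell
# Sketch: inspect the Redis backend from the command line.
# Assumes redis-cli is installed and Redis listens on 127.0.0.1:6379.
redis_key_count() {
    # Number of keys currently stored in the selected database.
    redis-cli -h 127.0.0.1 -p 6379 dbsize
}

watch_redis_traffic() {
    # Streams every command Redis receives; stop with Ctrl-C.
    redis-cli -h 127.0.0.1 -p 6379 monitor
}
```

With `watch_redis_traffic` running in one terminal and a query sent through unbound from another, my assumption is that no Redis activity points to unbound's in-memory cache, a read without a following write points to the Redis backend, and a read followed by a write points to a fresh lookup. I haven't confirmed this against the unbound source.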

Screenshot of phpRedisAdmin

Please discuss the pros and cons of this setup, if interested.