Reading what you said here about non-capturing groups, I was reminded that I wanted to extend our regex documentation.

As you've read in the other (two-year-old) thread, the regex engine inside FTL is a POSIX-compliant, ERE-like (extended regular expressions) engine. We initially started with BRE (basic regular expressions) but later switched to ERE-like matching, as BRE is now considered obsolete.

Why do I say "ERE-like"? Because ERE does not specify some useful features (such as the back-references \1 to \9) that BRE did specify, so it makes sense to deviate slightly from the standard and accept them (this isn't a breaking change).

Below, I'll try to summarize what FTL's regex engine can do that is (currently) missing from the cheat sheet in our documentation (some parts are mentioned elsewhere on our regex pages). As you'll see, not much is missing.

Back references

`\1` to `\9`

A back reference is a backslash followed by a single non-zero decimal digit n. It matches the same sequence of characters matched by the n-th parenthesized subexpression.
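To make this concrete, here is a small sketch using Python's `re` module. Note that `re` is a different engine from the one inside FTL, so this only illustrates the concept; the pattern and the domain names are made up for the example.

```python
import re

# Hypothetical pattern: the same label appears twice, e.g. "foo.foo.com".
# ([a-z]+) is group 1; \1 must then match exactly the same text again.
repeated_label = re.compile(r"^([a-z]+)\.\1\.com$")

for domain in ["foo.foo.com", "foo.bar.com"]:
    m = repeated_label.search(domain)
    print(domain, "->", m.group(1) if m else None)
```

The first domain matches with `\1` equal to `foo`; the second does not, because `bar` differs from what group 1 captured.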

Hexadecimal literals

A literal can be an ordinary character or digit, an escape sequence (\n, \t, ...) or a hex-encoded character starting with \x, such as \x1B. However, I see little to no use for this in the context of domains, not even for internationalized domains with special characters, as these should be Punycode-encoded.
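A quick sketch of both points, using Python's `re` module and its built-in `idna` codec as stand-ins (not FTL itself): a hex escape is just another way of writing a literal, and an internationalized domain turns into plain ASCII once it is Punycode-encoded, so ordinary literals are enough to match it.

```python
import re

# \x61 is the hex escape for "a", so this pattern is equivalent to "abc".
hex_pattern = re.compile(r"\x61bc")
print(bool(hex_pattern.fullmatch("abc")))  # True

# Internationalized domains are written in Punycode (ACE form) on the wire,
# so the regex only ever sees ASCII characters.
ace = "bücher.de".encode("idna").decode("ascii")
print(ace)  # xn--bcher-kva.de
print(bool(re.fullmatch(r"xn--bcher-kva\.de", ace)))  # True
```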

Assertion characters

Besides the well-known "anchors" ^ and $, which match the start and end of the input, respectively, we also support the following assertion characters:

- `\<` – Beginning of word
- `\>` – End of word
- `\b` – Word boundary
- `\B` – Non-word boundary
- `\d` – Digit character (equivalent to `[[:digit:]]`)
- `\D` – Non-digit character (equivalent to `[^[:digit:]]`)
- `\s` – Space character (equivalent to `[[:space:]]`)
- `\S` – Non-space character (equivalent to `[^[:space:]]`)
- `\w` – Word character (equivalent to `[[:alnum:]_]`)
- `\W` – Non-word character (equivalent to `[^[:alnum:]_]`)
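Here is a sketch of how a word boundary and the shorthand classes behave, using Python's `re` module as a stand-in. Python has no `\<` or `\>`, so `\b` is used at both ends of the word instead; the `tracker` pattern and all domain names are hypothetical.

```python
import re

# \b on both sides: "ads" must be a whole word, not part of "loads".
whole_word = re.compile(r"\bads\b")
for domain in ["ads.example.com", "loads.example.com"]:
    print(domain, bool(whole_word.search(domain)))

# \d+ requires at least one digit; \w+ matches one label of word characters.
# re.ASCII restricts \w to [A-Za-z0-9_], roughly like [[:alnum:]_] in the C locale.
tracker = re.compile(r"^tracker\d+\.\w+\.com$", re.ASCII)
print(bool(tracker.search("tracker42.cdn.com")))  # True
print(bool(tracker.search("tracker.cdn.com")))    # False
```

Without the re.ASCII flag, Python's `\w` would also accept non-ASCII letters, which is one of the places where engines commonly differ.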

Non-greedy matching

Normally a repeated expression is greedy, that is, it matches as many characters as possible:

- `*` – matches 0 or more times
- `?` – matches 0 or 1 times
- `+` – matches 1 or more times
- `{n}` – matches exactly n times
- `{n,}` – matches n or more times
- `{n,m}` – matches n to m (inclusive) times
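The operators above can be tried side by side on a run of five "a"s. This sketch uses Python's `re` module, which is not FTL's engine, but the greedy semantics of these basic operators are the same idea: each one consumes as much as its bounds allow.

```python
import re

text = "aaaaa"
# re.match anchors at the start; each greedy operator takes as many "a"s as it may.
greedy = {
    pattern: re.match(pattern, text).group()
    for pattern in [r"a*", r"a?", r"a+", r"a{3}", r"a{3,}", r"a{2,4}"]
}
for pattern, matched in greedy.items():
    print(f"{pattern:8} -> {matched!r}")
```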

Simple example for greedy matching ("eating as much as possible"):

regex: (.*)ab

input: bcdabdcbabcd

^^^^^^^^ab

match: bcdabdcb
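The greedy example can be reproduced with Python's `re` module (a different engine from FTL's, used here only for illustration):

```python
import re

m = re.search(r"(.*)ab", "bcdabdcbabcd")
# (.*) first swallows the whole input, then gives characters back only until
# "ab" can match, which happens at the *last* "ab" in the input.
print(m.group(1))  # bcdabdcb
print(m.span(1))   # (0, 8)
```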

Adding a ? to a repeat operator (such as ? or *) makes the subexpression minimal, or non-greedy. A non-greedy subexpression matches as few characters as possible:

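- `*?` – matches 0 or more times, as few as possible
- `??` – matches 0 or 1 times, as few as possible
- `+?` – matches 1 or more times, as few as possible
- `{n}?` – matches exactly n times
- `{n,}?` – matches n or more times, as few as possible
- `{n,m}?` – matches n to m (inclusive) times, as few as possible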
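Running the same six operators in their minimal form on the same input shows the difference. Again, this is a sketch with Python's `re` module, not FTL's engine. Note that `{n}?` behaves exactly like `{n}`, since there is only one possible count.

```python
import re

text = "aaaaa"
# The trailing ? makes each operator stop at the smallest count it allows.
minimal = {
    pattern: re.match(pattern, text).group()
    for pattern in [r"a*?", r"a??", r"a+?", r"a{3}?", r"a{3,}?", r"a{2,4}?"]
}
for pattern, matched in minimal.items():
    print(f"{pattern:8} -> {matched!r}")
```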

Simple example for non-greedy matching ("eating as little as possible"):

regex: (.*?)ab

input: bcdabdcbabcd

^^^ab

match: bcd
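The non-greedy example in Python's `re` module (used only as an illustration, not FTL's engine). Collecting all matches with `re.findall` also makes the contrast visible: the minimal version stops at each "ab" in turn, while the greedy one consumes everything up to the last "ab" in one go.

```python
import re

text = "bcdabdcbabcd"
# (.*?) stops at the first "ab" it can reach.
lazy = re.search(r"(.*?)ab", text).group(1)
print(lazy)  # bcd

# findall continues scanning after each match.
print(re.findall(r"(.*?)ab", text))  # ['bcd', 'dcb']
print(re.findall(r"(.*)ab", text))   # ['bcdabdcb']
```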

Note that this does not (always) mean the same thing as matching as many or as few repetitions as possible. Also note that minimal repetitions are not supported for approximate matching, for performance reasons.
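One way to see why "minimal" is not the same as "fewest repetitions possible": the search first settles on the leftmost starting position and only then keeps the repetition short. This is a sketch of how Python's `re` behaves; FTL's engine may differ in details.

```python
import re

# A match with zero repetitions of . exists ("ab" at the end), but the
# leftmost start wins, so .*? has to cover "aa" to reach the "b".
m = re.search(r"a.*?b", "aaab")
print(m.group())  # aaab
```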

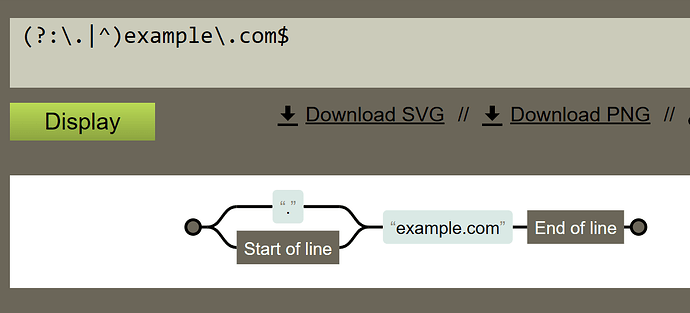

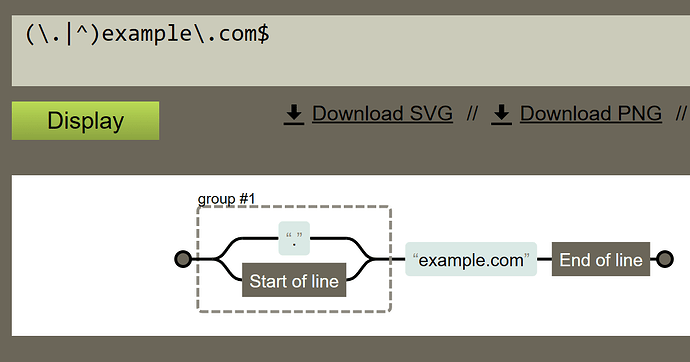

Non-capture groups

Non-capturing groups perform slightly better because we do not have to create a match record for them. However, be aware of possible caveats: "normal" groups inside non-capturing groups still capture. For example, (?:([A-Za-z]+):) matches "ftp:", and even though the entire match is covered by the non-capturing group, the inner group still returns "ftp" for \1.
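The caveat can be checked with Python's `re` module (an illustration only, not FTL's engine): the outer group is not counted, but the inner one still is.

```python
import re

pattern = re.compile(r"(?:([A-Za-z]+):)")
m = pattern.match("ftp:")
print(pattern.groups)  # 1, only the inner capturing group is counted
print(m.group(0))      # ftp:
print(m.group(1))      # ftp
```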

Options

Finally, you can specify options for the regex, such as disabling the default case-insensitive matching. Typically, this shouldn't be done for domains; however, if you really, really want to do this, you can prepend (?-i) to your regex, as in

(?-i)intENtional CASe-senSItive matCHING
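For comparison, here is the same idea sketched in Python's `re` module. This is not FTL syntax: Python only accepts the scoped form `(?-i:...)`, so the whole pattern is wrapped in it, and `re.IGNORECASE` is passed to imitate FTL's case-insensitive default.

```python
import re

# Imitates FTL's default: case-insensitive matching.
default = re.compile(r"intENtional", re.IGNORECASE)
# (?-i:...) switches case-insensitivity off for the enclosed part only.
strict = re.compile(r"(?-i:intENtional)", re.IGNORECASE)

print(bool(default.fullmatch("intentional")))  # True
print(bool(strict.fullmatch("intentional")))   # False
print(bool(strict.fullmatch("intENtional")))   # True
```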

Other supported options are (?U), which makes the repetition operators in your regex non-greedy unless a ? is appended, and (?r), which causes the regex to be matched in a right-associative rather than the default left-associative manner.

Keep in mind that these options are more likely to hurt than to help (unless you know exactly what you are doing with them).