I ended up on a fake Netflix site on a .top domain, so I looked for references on the most malicious TLDs by volume and found a couple of useful ones. Links, and the resulting blacklist regexes, below.

^.*\.(surf|casa|live|icu|gq|info|ml|tk|cam|top)$

^.*\.(zw|bd|ke|am|sbs|date|pw|quest|cd|bid|ws|ga|cf)$

Sensitive TLDs – (still on previous link)

^.*\.(casino|xxx|poker|porn|bet|sex|sexy|adult|webcam)$

Thank you for sharing this.

A word of caution for casual by-passers:

As always with any blocklists, you should be aware of potential overblocking when blindly applying any available lists you come across.

As an example, my favourite DynDNS provider also offers .icu domains.

So if I'd just added above regex without adequate scrutiny, I may have shut myself off from my DynDNS domains.
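One way to catch this kind of overblocking before applying a list is to run the domains you depend on through the regexes first. The following is only a minimal sketch: the patterns are copied from the first post, the sample domains are made up, and Python's `re` is assumed to behave like Pi-hole's regex engine for patterns this simple.

```python
import re

# Patterns copied from the first post of this thread.
BLOCK_PATTERNS = [
    r"^.*\.(surf|casa|live|icu|gq|info|ml|tk|cam|top)$",
    r"^.*\.(zw|bd|ke|am|sbs|date|pw|quest|cd|bid|ws|ga|cf)$",
    r"^.*\.(casino|xxx|poker|porn|bet|sex|sexy|adult|webcam)$",
]


def overblocked(domains, patterns=BLOCK_PATTERNS):
    """Return the domains (in input order) that any pattern would block."""
    compiled = [re.compile(p) for p in patterns]
    hits = []
    for domain in domains:
        name = domain.strip().rstrip(".").lower()
        if any(rx.search(name) for rx in compiled):
            hits.append(domain)
    return hits


if __name__ == "__main__":
    # Hypothetical domains you rely on; replace with your own.
    needed = ["myhome.example.icu", "pi-hole.net", "docs.example.info"]
    for domain in overblocked(needed):
        print("would be blocked:", domain)
```

Anything this prints either needs a whitelist entry or a change to the regex.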


Interested in blocking TLDs. I was reading this Reddit article (newly registered domains) and ended up on GitHub, where I found a script to retrieve them. I used this to create an RPZ (response policy zone), refreshed daily, but soon found out that even a seven (7) day list contains a massive number of entries (691913 today), which implies that unbound, which I use to block RPZ entries, needs a lot of memory.

I then started looking for a way to shorten the list. I ended up extracting the TLD from every domain in the list; even a single file (2022-10-03.domains) contains 412 unique TLDs.
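For anyone wondering what turning such a domain list into an RPZ involves, here is a rough sketch. It is not the script from GitHub; the function name, the SOA/NS placeholder values and the choice of `CNAME .` (the usual RPZ way of answering NXDOMAIN) are my assumptions.

```python
def rpz_zone(domains, serial=1):
    """Build RPZ zone file text that answers NXDOMAIN for each domain."""
    lines = [
        "$TTL 300",
        # Placeholder SOA and NS records; an RPZ only needs them to be valid.
        f"@ SOA localhost. root.localhost. {serial} 3600 600 86400 300",
        "@ NS localhost.",
    ]
    # Normalise and de-duplicate the input names.
    names = sorted({d.strip().rstrip(".").lower() for d in domains if d.strip()})
    for name in names:
        lines.append(f"{name} CNAME .")    # the domain itself
        lines.append(f"*.{name} CNAME .")  # and everything below it
    return "\n".join(lines) + "\n"
```

Each domain becomes two records, so a list of roughly 690000 domains ends up as well over a million zone entries, which fits with the memory usage mentioned above.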
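The TLD extraction can be sketched roughly like this. This is not the script I actually used, just an illustration that assumes one domain per line and treats everything after the last dot as the TLD; the function names are made up.

```python
from collections import Counter


def tld_of(domain):
    """Return the last label of a domain name, lower-cased."""
    return domain.strip().rstrip(".").lower().rsplit(".", 1)[-1]


def count_tlds(lines):
    """Count how often each TLD occurs in an iterable of domain names."""
    counts = Counter()
    for line in lines:
        name = line.strip()
        if not name or name.startswith("#"):
            continue  # skip blank lines and comments
        counts[tld_of(name)] += 1
    return counts


if __name__ == "__main__":
    # File name as mentioned above; adjust the path to wherever it lives.
    with open("2022-10-03.domains", encoding="utf-8") as handle:
        counts = count_tlds(handle)
    print(len(counts), "unique TLDs")
    for tld, n in counts.most_common(10):
        print(tld, n)
```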

I thus conclude that blocking only the TLDs mentioned in the articles from the first post isn't going to be enough; a lot more is required...

To give you an idea of the TLDs that came out of a single day "nrd" list, I've included a zip.

nrd-TLDi.zip (554.2 KB)

It's worth keeping a defined end result in mind when implementing security measures, and balancing the cost of those measures against the cost of not having them. The TLD regexes are a low-cost approach to avoid accidentally being caught out by a site on what Spamhaus and Palo Alto are seeing as the most commonly abused TLDs.

If you use them, chances are you'll need to omit some TLDs or whitelist some domains and tailor the list to your needs. I've whitelisted a couple of .info domains since, and if I get any more I'll just remove .info from the regex. On the other hand, every occurrence of .top I've seen has been in a malicious context, so that one can stay for now.
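If you'd rather keep a plain list of TLDs and generate the regex from it, so that dropping something like .info is a one-word change, a small helper along these lines would do. This is a sketch only; `build_tld_regex` is a made-up name, and the output simply follows the `^.*\.(a|b|c)$` shape used at the start of this thread.

```python
import re

# Letters, digits and hyphens only, so nothing needs escaping in the regex.
_LABEL = re.compile(r"[a-z0-9-]+")


def build_tld_regex(tlds, exclude=()):
    """Build a '^.*\\.(a|b|c)$' style regex from TLDs, minus exclusions."""
    skip = {t.lower().lstrip(".") for t in exclude}
    kept = []
    for tld in tlds:
        tld = tld.lower().lstrip(".")
        if not _LABEL.fullmatch(tld):
            raise ValueError(f"not a plain TLD label: {tld!r}")
        if tld not in skip and tld not in kept:
            kept.append(tld)
    if not kept:
        raise ValueError("no TLDs left after exclusions")
    return r"^.*\.(" + "|".join(kept) + r")$"


if __name__ == "__main__":
    first = ["surf", "casa", "live", "icu", "gq", "info", "ml", "tk", "cam", "top"]
    print(build_tld_regex(first, exclude=["info"]))
```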

In comparison, blocking newly registered domains on an individual domain basis feels extremely costly – to keep on top of the sheer numbers, the processing needed to keep Pi-hole up to date, and the fact that the purpose of the domains is unknown and cannot be determined from their newly registered status. It feels like you'll always be behind catching up for the sake of it, and not supporting any defined end result.

Worth mentioning that good security practice is just as important and varies according to specific needs. Generally speaking, that means: keep systems and devices up to date, use a decent password manager and 2FA on accounts (a physical key like a YubiKey for important accounts), don't click unsolicited links, pause and think before responding to requests, keep your browser(s) up to date and avoid all but very trusted extensions, and have good backups of your valuable data.

For the most part, I agree with your statements, just sharing some of my findings.

- In the above zip file, you could see there are 412 TLDs (extracted from the downloaded file)

- Thanks to the great work of the developers, the query database now contains an entry for every domain you ever visited (pihole-FTL.db / domain_by_id table / domain). Using the same method to extract the TLD (parameter substitution), it's easy enough to get the TLDs you have used (number depends on the database setting MAXDBDAYS).

- It is then equally easy to make the diff between the TLDs from the nrd file and the TLDs that have been used, result:

392 (corrected from 410 after a script error) out of 412 TLDs in the nrd file have never been used.
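The comparison can be sketched as follows. My own script used shell parameter substitution, so this Python version is only an illustration: it reads the `domain` column of the `domain_by_id` table mentioned above, and the database path and function names are assumptions.

```python
import sqlite3


def used_tlds(conn):
    """Return the set of TLDs seen in the domain_by_id table."""
    rows = conn.execute("SELECT domain FROM domain_by_id")
    return {
        row[0].rstrip(".").lower().rsplit(".", 1)[-1]
        for row in rows
        if row[0]
    }


def never_used(nrd_tlds, conn):
    """Return the TLDs from the nrd list that never appear in the database."""
    return sorted({t.lower() for t in nrd_tlds} - used_tlds(conn))


if __name__ == "__main__":
    # Assumed default location; work on a copy if you prefer.
    conn = sqlite3.connect("/etc/pihole/pihole-FTL.db")
    # Hypothetical input: in practice, the TLDs extracted from the nrd file.
    print(never_used(["top", "icu", "com"], conn))
    conn.close()
```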

I agree that the load on the pihole to keep this up to date would be high. However, I'm not using pihole to block these TLDs (no regexes); instead I'm considering (testing) 2 options:

-

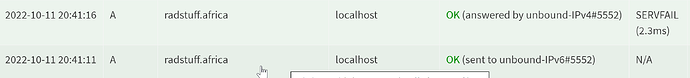

use an RPZ (unbound), downside is the memory usage, only blocked for a limited time (number of days in the script), the TLD isn't blocked, only the specific domain. Upside is that other domains under the TLD are allowed, pihole reports (no delay):

- use suricata (running on the pfsense firewall) to reject queries to specific TLDs. Downside is that the first query to a domain under a TLD with a suricata rule takes a long(er) time to time out, and the entire TLD is blocked. In this scenario, the suricata rule looks like this (copied from an existing rule, with alert changed into reject; on the pfsense forum I've been advised to use a sid starting with 1000xxx to avoid conflicts with commercial rules):

reject dns any any -> $EXTERNAL_NET 53 (msg:"ET DNS Query for .africa TLD"; dns.query; content:".africa"; endswith; fast_pattern; classtype:bad-unknown; sid:1000001; rev:1; metadata:affected_product Any, attack_target Client_Endpoint, created_at 2022_10_11, deployment Perimeter, former_category DNS, signature_severity Minor, updated_at 2022_10_11;)

and pihole reports
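Writing one such rule per TLD by hand gets tedious, so the rules could be generated. The sketch below is modelled on the .africa rule above but leaves out the metadata block and the "ET" prefix (which belonged to the rule I copied); the function names are made up, and sids simply count up from 1000001.

```python
def tld_rule(tld, sid):
    """Return one suricata reject rule for DNS queries ending in .<tld>."""
    tld = tld.lower().lstrip(".")
    return (
        "reject dns any any -> $EXTERNAL_NET 53 "
        f'(msg:"DNS Query for .{tld} TLD"; dns.query; content:".{tld}"; '
        f"endswith; fast_pattern; classtype:bad-unknown; sid:{sid}; rev:1;)"
    )


def tld_rules(tlds, first_sid=1000001):
    """Return one rule per TLD, numbering sids upwards from first_sid."""
    return [tld_rule(tld, first_sid + i) for i, tld in enumerate(tlds)]


if __name__ == "__main__":
    for rule in tld_rules(["africa", "top"]):
        print(rule)
```

Test the output on your own setup before relying on it.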

All thoughts are welcome...

I've just replaced my TLD regexes in Pi-hole with one that covers a small number of TLDs that I see repeatedly used in a bad way. I'll tweak it now and again. That'll do for me.

And the regexes (TLDs) that you use now are?

A subset of the ones at the start of this thread plus a couple of others.