Hi

Please can someone add the Cryptojacking Campaign - Drupal Sites list to the main Pi-hole block list repository.

Link Drupal Cryptojacking Campaigns -- Affected Sites - Google Sheets …

Thanks


I took the hosts from the lists above and placed them on this pastebin.

It will never expire so go ahead and use it as an additional list.

https://pastebin.com/raw/a1TPEPfP (manually updated)

I signed up for updates from that list, so once it gets updated I'll update the pastebin too (I'll keep maintaining the pastebin for those using it).

L.E.

You can also use @deHakkelaar’s list:

http://dehakkelaar.nl/lists/cryptojacking_campaign.list.txt

It auto updates itself. Simply add it to the block lists in your pi-hole and it will work.

That's great thanks

EDIT: Read the posts below this one for updates!

Put it in CRON like so:

sudo mkdir /var/www/html/lists

echo $((RANDOM % 60)) "2 * * * root curl -s 'https://docs.google.com/spreadsheets/d/14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4/export?format=csv&id=14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4&gid=0' | awk -F , '{print \$1}' | tail -n +2 | tee /var/www/html/lists/cryptojacking_campaign.list.txt 2>&1 > /dev/null && curl -s 'https://docs.google.com/spreadsheets/d/14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4/export?format=csv&id=14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4&gid=1317599353' | awk -F , '{print \$1}' | tail -n +2 | tee -a /var/www/html/lists/cryptojacking_campaign.list.txt 2>&1 > /dev/null" | sudo tee /etc/cron.d/cryptojacking_campaign

sudo service cron reload

When cron runs (once a day, at a random minute between 2 and 3 AM that is picked when the cron file is created), the generated list is written locally to "/var/www/html/lists/cryptojacking_campaign.list.txt".
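A note on the quoting in the cron line: the whole line is passed to `echo` inside double quotes, and single quotes *inside* double quotes are plain characters, so they do not stop your shell from expanding `$1` before the text is written to /etc/cron.d. Writing it as `\$1` keeps it literal. The small sketch below (the function name `show_quoting` is made up for illustration) shows the difference:

```shell
#!/bin/sh
# Illustrates the quoting pitfall: inside "...", $1 is expanded by the shell
# even when it sits between single quotes; \$1 is passed through literally.
show_quoting() {
  # Called without arguments, so $1 is empty here, as it usually is in an
  # interactive shell.
  echo "awk -F , '{print $1}'"    # becomes: awk -F , '{print }'
  echo "awk -F , '{print \$1}'"   # stays:   awk -F , '{print $1}'
}

show_quoting
```

An awk program of `{print }` prints the whole input line, so an unescaped `$1` would put complete CSV rows into the list instead of just the first column.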

To create the list immediately instead of waiting for CRON to run:

curl -s 'https://docs.google.com/spreadsheets/d/14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4/export?format=csv&id=14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4&gid=0' | awk -F , '{print $1}' | tail -n +2 | sudo tee /var/www/html/lists/cryptojacking_campaign.list.txt 2>&1 > /dev/null && curl -s 'https://docs.google.com/spreadsheets/d/14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4/export?format=csv&id=14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4&gid=1317599353' | awk -F , '{print $1}' | tail -n +2 | sudo tee -a /var/www/html/lists/cryptojacking_campaign.list.txt 2>&1 > /dev/null
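The text-processing part of the command above (take the first CSV column, drop the header row) can be checked offline. The sketch below runs it on a made-up two-column CSV; the sample data and the function name are hypothetical, not taken from the real sheet:

```shell
#!/bin/sh
# Offline check of the awk/tail part of the pipeline, on sample data.
first_column_without_header() {
  # -F , splits each row on commas; tail -n +2 skips the header row.
  # Note: this simple split does not handle quoted fields containing commas.
  awk -F , '{print $1}' | tail -n +2
}

printf 'Domain,Notes\nexample.com,sample\nexample.org,sample\n' |
  first_column_without_header
```

With that input it should print `example.com` and `example.org`, one per line.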

On the Pi-hole admin page you can add the link below as a list:

http://localhost/lists/cryptojacking_campaign.list.txt

Or use my hosted copy instead:

http://dehakkelaar.nl/lists/cryptojacking_campaign.list.txt

The hosted copy syncs with the Google sheet every hour, as the list is still growing.

... for the lazy ones

@Ukruler54321, maybe you could mention the source in your first posting:

I added the https://pastebin.com/raw/a1TPEPfP URL to adlists.list, which is in /etc/pihole. Will this do the trick?

That will do the trick.

If you run the command below, the list at the added URL will be imported into Pi-hole:

pihole -g

But I offered two automated alternatives so as to relieve @RamSet from having to manually update that pastebin list whenever the Google sheet gets updated.

If you don't want to set up cron as described above, you can use the link I provided as a list instead of the pastebin one:

http://dehakkelaar.nl/lists/cryptojacking_campaign.list.txt

And you don't need to edit the "adlists.list" file manually.

Instead, use the web GUI to add the URL to the lists:

I updated the Pastebin list (and added the new host listed under the second tab).

@deHakkelaar they started adding hosts to the second tab of the spreadsheet.

You should update your crontab to grab from there as well.

I have deleted https://pastebin.com/raw/a1TPEPfP link from adlists.list.

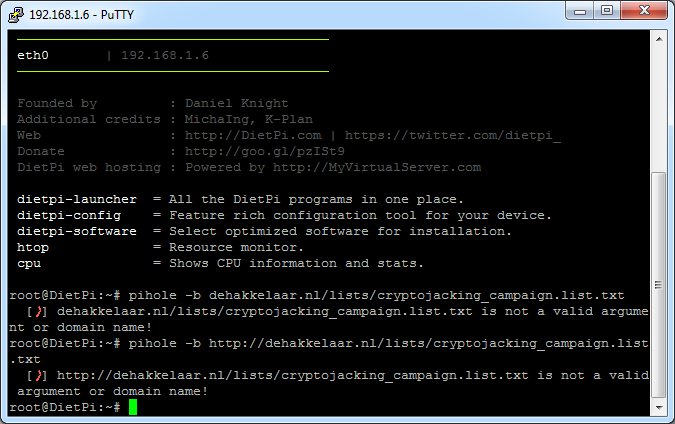

Then added dehakkelaar.nl/lists/cryptojacking_campaign.list.txt to the blocklist using the following command.

The terminal displays "is not a valid argument or domain name!"

pihole -b adds a domain to the blacklist. It's not the correct way to add a list to your adlists.

sudo nano /etc/pihole/adlists.list

add http://dehakkelaar.nl/lists/cryptojacking_campaign.list.txt

at the end, save, and run:

pihole -g

That should get you there
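If you prefer to script the manual edit, a minimal sketch could append the URL only when it is not already in the file, so running it twice does not create duplicates. It assumes a Pi-hole version that still reads /etc/pihole/adlists.list, as in this thread; newer releases may manage lists differently, so check your version first. The function name `add_adlist_url` is hypothetical:

```shell
#!/bin/sh
# Hypothetical helper: append a list URL to an adlists.list-style file
# (one URL per line), but only if that exact line is not there yet.
add_adlist_url() {
  local file="$1" url="$2"
  # -q: quiet, -x: match the whole line, -F: treat the URL as a fixed string
  if grep -qxF -- "$url" "$file" 2>/dev/null; then
    echo "already listed: $url"
  else
    printf '%s\n' "$url" >> "$file"
    echo "added: $url"
  fi
}
```

Because `sudo` cannot call a shell function, run it from a root shell (for example after `sudo -i`): `add_adlist_url /etc/pihole/adlists.list http://dehakkelaar.nl/lists/cryptojacking_campaign.list.txt`, then `pihole -g` as above.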

I updated the cron instructions above; the cron line is a bit long now.

And the list below is now generated every hour, pulling in both sheets:

http://dehakkelaar.nl/lists/cryptojacking_campaign.list.txt

```
pi@noads:~ $ curl -s http://dehakkelaar.nl/lists/cryptojacking_campaign.list.txt | wc -l
393
pi@noads:~ $ curl -s http://dehakkelaar.nl/lists/cryptojacking_campaign.list.txt | tail
thenationalpastimemuseum.com
tigase.net
unitedbike.com
vini.pf
vivicariolano.portaldoholanda.com.br
widescreen-centre.co.uk
wildfor.life
2plus-misenal-v2.rtvc.gov.co
www.trade.gov.mm
nfrc.ucla.edu
```

Use the Pi-hole admin web GUI to add URL lists.

It will automatically pull all the blocked domains into Pi-hole.

The link below should get you there:

Thanks it worked

@deHakkelaar the spreadsheet has 5 pages. Does your list contain all of them?

Compromised Drupal properties (Source: Malwarebytes blog)

I concatenated all of them into the pastebin list.

Now it does:

pi@noads:~ $ curl -s https://dehakkelaar.nl/lists/cryptojacking_campaign.list.txt | wc -l

1082
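Since the list now concatenates several tabs, the same host could in principle appear more than once, so `wc -l` counts lines rather than distinct hosts. A small sketch to compare the two (the function name `count_hosts` is made up for illustration):

```shell
#!/bin/sh
# Compare the total number of lines in a list file with the number of
# unique, non-empty lines (a host repeated across tabs counts once).
count_hosts() {
  local file total unique
  file="$1"
  # $(( )) normalises wc output, which some systems pad with spaces.
  total=$(( $(wc -l < "$file") ))
  unique=$(( $(grep -v '^[[:space:]]*$' "$file" | sort -u | wc -l) ))
  echo "total: $total unique: $unique"
}
```

For example, download the list with `curl -s https://dehakkelaar.nl/lists/cryptojacking_campaign.list.txt -o /tmp/cj.txt` and then run `count_hosts /tmp/cj.txt`.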

And I moved shop to a VM that's got a Let's Encrypt SSL certificate:

https://dehakkelaar.nl/lists/cryptojacking_campaign.list.txt

Better to test the URL in a browser first, as my DNS change might not have propagated yet.

If you get a "Secure Connection Failed" message or similar in the browser, you need to wait a bit (DNS record TTL = 12 hours).

But as soon as you can load that https link in a browser, you can add it as a list in Pi-hole, and remove the old http one of course.

I'll keep the old http one running for a while as well.

The crontab line became too long, so I moved the commands into a script and call that script from the crontab:

```shell
#!/bin/bash

curl -s 'https://docs.google.com/spreadsheets/d/14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4/export?format=csv&id=14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4&gid=0' | awk -F , '{print $1}' | tail -n +2 | tee /var/www/html/lists/cryptojacking_campaign.list.txt
curl -s 'https://docs.google.com/spreadsheets/d/14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4/export?format=csv&id=14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4&gid=1317599353' | awk -F , '{print $1}' | tail -n +2 | tee -a /var/www/html/lists/cryptojacking_campaign.list.txt
curl -s 'https://docs.google.com/spreadsheets/d/14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4/export?format=csv&id=14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4&gid=1874348923' | awk -F , '{print $1}' | tail -n +2 | tee -a /var/www/html/lists/cryptojacking_campaign.list.txt
curl -s 'https://docs.google.com/spreadsheets/d/14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4/export?format=csv&id=14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4&gid=1200297433' | awk -F , '{print $1}' | tail -n +2 | tee -a /var/www/html/lists/cryptojacking_campaign.list.txt
curl -s 'https://docs.google.com/spreadsheets/d/14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4/export?format=csv&id=14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4&gid=820026181' | awk -F , '{print $1}' | awk -F '//' '{print$2}' | grep -v '^$' | tail -n +2 | tee -a /var/www/html/lists/cryptojacking_campaign.list.txt
```
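For comparison, the same job could be written as a loop. This is a sketch, not the script actually used for the list: the sheet ID and tab gids are copied from the commands above, while the function names, the temp-file-then-rename step and the `-f`/`-L` curl flags are my own assumptions. Unlike the script above, the URL tab here drops its header row before extracting the part after `//`.

```shell
#!/bin/sh
# Sketch only: a loop-based variant of the script above.
SHEET_ID='14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4'
DOMAIN_GIDS='0 1317599353 1874348923 1200297433'  # tabs whose first column is a host
URL_GID='820026181'                                # tab whose first column is a full URL

# Print one tab as CSV. -f: fail on HTTP errors, -L: follow redirects.
fetch_tab() {
  curl -sfL "https://docs.google.com/spreadsheets/d/${SHEET_ID}/export?format=csv&gid=$1"
}

# Write the combined list to a temp file next to the target, then rename it,
# so the web server never serves a half-written list. If any download fails,
# the previous list is left untouched and the function returns non-zero.
build_list() {
  local out tmp csv gid
  out="$1"
  tmp=$(mktemp "${out}.XXXXXX") || return 1
  for gid in $DOMAIN_GIDS; do
    csv=$(fetch_tab "$gid") || { rm -f "$tmp"; return 1; }
    # First column, header row dropped.
    printf '%s\n' "$csv" | awk -F , '{print $1}' | tail -n +2 >> "$tmp"
  done
  csv=$(fetch_tab "$URL_GID") || { rm -f "$tmp"; return 1; }
  # Drop the header row, keep what follows "//", skip empty lines.
  printf '%s\n' "$csv" | awk -F , '{print $1}' | tail -n +2 |
    awk -F '//' '{print $2}' | grep -v '^$' >> "$tmp"
  chmod 644 "$tmp"   # mktemp creates mode 600; the web server must be able to read it
  mv "$tmp" "$out"
}
```

A cron-driven wrapper script would then end with `build_list /var/www/html/lists/cryptojacking_campaign.list.txt`. Creating the temp file in the same directory keeps the final `mv` a simple rename on the same filesystem.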

I've been using your list (http://dehakkelaar.nl/lists/cryptojacking_campaign.list.txt) but now it fails.

Would you mind fixing it?

Thanks.

I made some attempts, but it seems something has changed.

I've been using the method/answer below since I started the list to get a CSV export from the source Google sheet:

https://support.google.com/docs/thread/40044224?hl=en

But now I'm getting a redirect message to a non-existent document:

```
pi@ph5:~/tmp $ curl -s -k 'https://docs.google.com/spreadsheets/d/14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4/export?format=csv&gid=0'
<HTML>
<HEAD>
<TITLE>Temporary Redirect</TITLE>
</HEAD>
<BODY BGCOLOR="#FFFFFF" TEXT="#000000">
<H1>Temporary Redirect</H1>
The document has moved <A HREF="https://doc-10-14-sheets.googleusercontent.com/export/l5l039s6ni5uumqbsj9o11lmdc/143vj9f65ajg5isrep70777h1k/1593828090000/115719355738033081065/*/14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4?format=csv&gid=0">here</A>.
</BODY>
</HTML>
```
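By default curl does not follow HTTP redirects: without `-L` it simply prints the body of the redirect response, which is the HTML page shown above. The thread does not say exactly what the eventual fix was, so treat the following as one plausible approach rather than the actual one: let curl follow the redirect with `-L`, and use `-f` so an HTTP error fails instead of producing an HTML page. The helper names are hypothetical:

```shell
#!/bin/sh
# Hypothetical helpers: build the CSV export URL for one sheet tab and
# download it, following Google's "307 Temporary Redirect".
sheet_csv_url() {
  local sheet_id="$1" gid="$2"
  printf 'https://docs.google.com/spreadsheets/d/%s/export?format=csv&gid=%s\n' "$sheet_id" "$gid"
}

fetch_sheet_csv() {
  # -s: silent, -f: fail on HTTP errors, -L: follow redirects
  curl -sfL "$(sheet_csv_url "$1" "$2")"
}
```

For example: `fetch_sheet_csv 14TWw0lf2x6y8ji5Zd7zv9sIIVixU33irCM-i9CIrmo4 0 | head`.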

I've asked badpackets.net if they made any changes.

But for now I don't know how to get the sheets exported.

So I've taken the list down for the time being.

PS: I did tidy up the script a bit to make it more readable.

Also, "issues" can be reported on GitHub:


Sorry, I didn't know you host it on GitHub. I filed an issue there.

That was an easy fix with the help of @yubiuser.

List is up again:

```
pi@ph5:~ $ pihole -g
[..]
[i] Target: https://dehakkelaar.nl/lists/cryptojacking_campaign.list.txt
[✓] Status: Retrieval successful
[i] Received 853 domains
[..]
```